Everyone wants their AI to be “more accurate.” But when you ask them how, you usually get one of two answers: “better prompts” or “bigger model.”

Both miss the point.

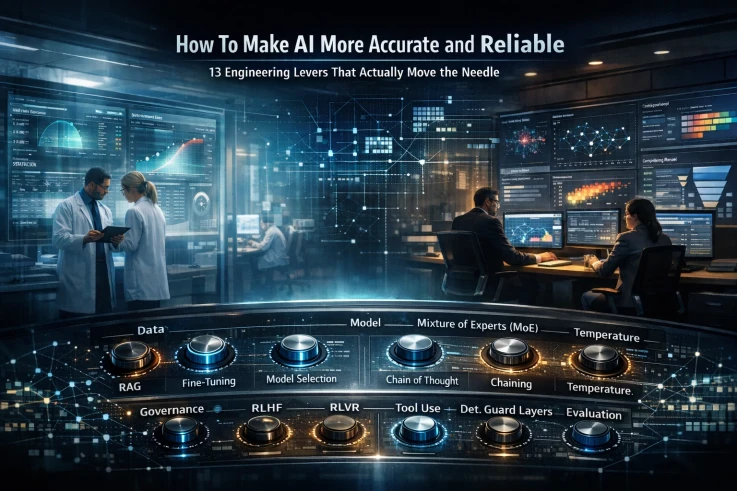

Accuracy isn’t a single knob you turn. It’s the result of decisions across your entire stack — from the data you feed the model, to the model you choose, to how you constrain its output after it generates a response. Miss any layer and you’re optimizing vibes instead of metrics.

Here’s the framework I use when building AI systems that actually need to be right.

The Four Layers of AI Accuracy

Before we get into the individual techniques, here’s the mental model that ties everything together. Improving accuracy happens at four levels:

Data — what the model knows (RAG, fine-tuning)

Model — what the model is (selection, architecture, MoE)

Inference Strategy — how the model thinks (Chain of Thought, chaining, temperature)

Governance — what catches the model’s mistakes (RLVR, guardrails, validators)

The most reliable production systems combine all four. Most teams stop at one or two and wonder why their system hallucinates in production.

Layer 1: Give the Model Better Information

RAG (Retrieval-Augmented Generation)

RAG attaches a search layer to your model so it can pull relevant documents before answering. Instead of relying purely on what the model memorized during training, you ground it in real, retrievable sources.

This is a massive win for domain-specific systems — think fraud detection, clinical decision support, legal research, or anything where the knowledge base changes frequently. It reduces hallucinations and injects current data that the model wouldn’t otherwise have access to.

But here’s the catch: RAG is only as good as your retrieval pipeline. Bad embeddings, poor chunking strategies, low-quality source documents, or a retriever that returns irrelevant junk will actively make things worse. You’ll get confident answers grounded in the wrong context, which is arguably more dangerous than a hallucination you can spot.

RAG increases factual grounding, not reasoning ability. Don’t confuse the two.
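Here is a minimal sketch of the retrieve-then-ground pattern. It assumes a toy bag-of-words similarity in place of a real embedding model, and the function names, sample documents, and `min_score` cutoff are all hypothetical. The point is the shape: score, filter out weak matches, and only then build the prompt.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: list[str], k: int = 2, min_score: float = 0.2) -> list[str]:
    """Return up to k documents, dropping anything scored below min_score."""
    q = tokenize(query)
    scored = sorted(((cosine(q, tokenize(d)), d) for d in docs), reverse=True)
    return [d for score, d in scored[:k] if score >= min_score]

def build_prompt(query: str, context: list[str]) -> str:
    """Ground the question in numbered sources and allow an 'I don't know'."""
    sources = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(context))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )

# Hypothetical knowledge base
DOCS = [
    "A chargeback dispute must be filed within 30 days of the transaction.",
    "Cafeteria hours: 8am-3pm on weekdays.",
    "Transactions over 10,000 USD require a second reviewer.",
]

question = "What is the deadline to dispute a chargeback?"
hits = retrieve(question, DOCS)
prompt = build_prompt(question, hits)
```

The `min_score` filter is the part worth copying: returning nothing is usually safer than handing the model irrelevant context it will confidently use.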

Fine-Tuning

If RAG gives the model access to better information at inference time, fine-tuning bakes it into the model’s weights. Supervised fine-tuning on domain data, structured outputs, or task-specific examples can have a massive impact — especially for narrow, well-defined tasks in enterprise systems.

The tradeoff is cost and maintenance. Fine-tuning requires curated data, compute, and ongoing evaluation as your domain shifts. But for production systems where you need consistent, specialized behavior, it’s one of the highest-ROI investments you can make.
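Most of the "curated data" work is unglamorous cleanup. As a rough sketch, assuming your raw examples are dicts with `prompt` and `completion` keys (a common convention, but check what your fine-tuning provider actually expects), the preparation step might look like this:

```python
import json
import random

def prepare_sft_records(raw, eval_fraction=0.2, seed=0):
    """Drop incomplete rows, deduplicate by prompt, and split into train/eval JSONL lines."""
    seen, clean = set(), []
    for row in raw:
        prompt = (row.get("prompt") or "").strip()
        completion = (row.get("completion") or "").strip()
        if not prompt or not completion:
            continue  # incomplete examples add noise, not signal
        if prompt in seen:
            continue  # duplicate prompts overweight a single behavior
        seen.add(prompt)
        clean.append({"prompt": prompt, "completion": completion})

    random.Random(seed).shuffle(clean)  # seeded so the split is reproducible
    n_eval = max(1, int(len(clean) * eval_fraction)) if len(clean) > 1 else 0
    eval_rows, train_rows = clean[:n_eval], clean[n_eval:]
    return [json.dumps(r) for r in train_rows], [json.dumps(r) for r in eval_rows]

# Hypothetical raw examples
RAW = [
    {"prompt": "Classify: card declined twice", "completion": "payment_issue"},
    {"prompt": "Classify: card declined twice", "completion": "payment_issue"},
    {"prompt": "Classify: where is my refund", "completion": "refund_status"},
    {"prompt": "Classify: reset my password", "completion": ""},
    {"prompt": "Classify: app crashes on login", "completion": "bug_report"},
]

train_lines, eval_lines = prepare_sft_records(RAW)
```

Holding out an eval split at this stage is what makes the "ongoing evaluation" part possible later.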

Layer 2: Choose the Right Model

Model Selection (LLM vs. SLM)

This sounds obvious, but I see teams get it wrong constantly. A 70-billion-parameter reasoning model summarizing a CSV is wasteful. A small language model doing medical reasoning is dangerous. Accuracy improves when you match model capacity to task complexity.

Large language models give you broad reasoning, strong abstraction, and the ability to handle ambiguity — but they’re expensive and sometimes overconfident. Small language models are faster, cheaper, and more controllable, but they have narrower capabilities.

Beyond size, you also have choices between fine-tuned vs. base models, instruction-tuned vs. reasoning-tuned architectures, and domain-specific variants. Each of these choices affects what kind of accuracy you get.
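One way to make "match capacity to task" concrete is a small routing function in front of your models. This is only a sketch: the tier names and task kinds below are hypothetical placeholders, not real model identifiers.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Task:
    kind: str          # e.g. "summarize", "extract", "diagnose"
    high_stakes: bool  # would a wrong answer cause real harm?

# Hypothetical task kinds that a small model handles well
SIMPLE_KINDS = {"summarize", "extract", "classify"}

def pick_model(task: Task) -> str:
    """Route by risk first, then by task complexity."""
    if task.high_stakes:
        return "large-reasoning-model"
    if task.kind in SIMPLE_KINDS:
        return "small-model"
    return "large-general-model"

csv_summary = pick_model(Task(kind="summarize", high_stakes=False))
medical = pick_model(Task(kind="diagnose", high_stakes=True))
```

Checking risk before complexity is a deliberate choice here: a "simple" task with serious consequences still goes to the most capable tier.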

Mixture of Experts (MoE)

Instead of one monolithic model where every parameter fires on every token, MoE architectures route each token to a small number of specialized expert subnetworks. Because only those few experts run per token, total parameter count can grow without a proportional increase in per-token compute.

MoE is more of an architectural decision than something you’ll configure yourself (unless you’re training from scratch), but it’s worth understanding because many of the most capable models today use this approach under the hood. Just know that MoE improves scaling efficiency — it doesn’t automatically fix hallucination or reasoning flaws.
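To make the routing idea concrete, here is a toy top-k gating sketch in plain Python. It assumes scalar "tokens" and hand-written router scores; in a real MoE layer the router is a learned layer and the experts are neural subnetworks, so treat this as an illustration of the mechanism only.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(token, router_logits, experts, k=2):
    """Send one token to its top-k experts and mix their outputs by router weight."""
    # Pick the k experts with the highest router scores; the rest never run.
    top = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Renormalize the scores of the chosen experts so the weights sum to 1.
    weights = softmax([router_logits[i] for i in top])
    output = sum(w * experts[i](token) for w, i in zip(weights, top))
    return output, top

# Four toy "experts"; only two are evaluated for this token.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x * x]
out, used = moe_forward(3.0, router_logits=[0.1, 2.0, -1.0, 2.0], experts=experts, k=2)
```

Experts 1 and 3 tie for the highest score, so each gets weight 0.5 and the output is the average of `3.0 * 2` and `3.0 * 3.0`.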

Layer 3: Improve How the Model Thinks

Chain of Thought (CoT)

Reasoning improves when the model externalizes intermediate steps. It’s that simple — and that powerful.

Zero-shot CoT is as easy as adding “think step by step” to your prompt. Few-shot CoT means providing worked examples of reasoning. Structured reasoning forces the model to output its thinking in a specific format (like JSON steps) so you can inspect and validate each stage.

The warning: more tokens don’t equal more intelligence. Without constraints, you can get verbose, meandering garbage that looks like reasoning but isn’t. CoT needs guardrails to be useful.
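A sketch of what structured reasoning with a guardrail could look like. The JSON shape (`steps` plus `answer`) and the five-step cap are my own assumptions for illustration; the idea is that reasoning you can parse is reasoning you can inspect and reject.

```python
import json

# Hypothetical instruction appended to the prompt
COT_INSTRUCTIONS = (
    "Think step by step. Respond with JSON only, in the form "
    '{"steps": ["..."], "answer": "..."} and use at most 5 steps.'
)

def parse_reasoning(raw: str, max_steps: int = 5) -> dict:
    """Parse a structured reasoning response; raise ValueError if it is malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("top-level value must be an object")
    steps = data.get("steps")
    if not isinstance(steps, list) or not steps:
        raise ValueError("steps must be a non-empty list")
    if not all(isinstance(s, str) and s.strip() for s in steps):
        raise ValueError("every step must be a non-empty string")
    if len(steps) > max_steps:
        raise ValueError(f"too many steps: {len(steps)} > {max_steps}")
    if "answer" not in data:
        raise ValueError("missing answer")
    return data

parsed = parse_reasoning('{"steps": ["17 * 3 = 51"], "answer": "51"}')
```

A response that rambles in free text, or pads itself with twenty steps, fails the parse and never reaches downstream code.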

LLM Chaining

Instead of asking a model to get everything right in a single pass, you build a workflow. Draft → Critic → Revise. Generator → Verifier. Planner → Executor → Validator.

This simulates iterative thinking, and it reduces first-pass mistakes by giving the system multiple opportunities to catch errors. In my experience, this is often more powerful than scaling model size: a well-designed chain of smaller models can outperform a single large model doing everything at once.
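The Generator → Verifier pattern can be sketched as a small loop. The `generate` and `verify` callables here are stand-ins for model calls, and the fake implementations exist only so the example runs; everything named below is hypothetical.

```python
def run_chain(task, generate, verify, max_rounds=3):
    """Generate, verify, and revise until the verifier passes or rounds run out.

    generate(task, feedback) returns a draft.
    verify(task, draft) returns None if the draft is acceptable, else a feedback string.
    """
    feedback = None
    for round_number in range(1, max_rounds + 1):
        draft = generate(task, feedback)
        feedback = verify(task, draft)
        if feedback is None:
            return draft, round_number
    raise RuntimeError(f"no draft passed verification after {max_rounds} rounds")

# Stand-ins for model calls: the first draft is wrong, the revision is right.
def fake_generate(task, feedback):
    return "4 + 4 = 8" if feedback else "4 + 4 = 9"

def fake_verify(task, draft):
    left, right = draft.split("=")
    a, b = (int(part) for part in left.split("+"))
    return None if a + b == int(right) else f"{a} + {b} is not {int(right)}"

result, rounds = run_chain("add 4 and 4", fake_generate, fake_verify)
```

Capping the rounds matters: a chain that can loop forever is a cost problem, and a chain that silently returns its last failed draft is an accuracy problem.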

Temperature

Temperature controls randomness in the model’s output distribution. Low temperature gives you more deterministic, stable responses — better for structured output and factual tasks. High temperature gives you more variation and creativity, but with higher hallucination risk.

For accuracy-critical work? Use low temperature. But be clear about what you’re doing: temperature doesn’t make the model smarter. It changes the sampling strategy. A wrong answer at temperature 0 is still a wrong answer.
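To see why temperature changes sampling but not knowledge, here is the standard temperature-scaled softmax on some made-up logits. The logit values are invented for illustration.

```python
import math

def token_probabilities(logits, temperature):
    """Convert logits to probabilities after dividing by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive; treat 0 as greedy argmax")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
low = token_probabilities(logits, temperature=0.2)
high = token_probabilities(logits, temperature=2.0)
```

At low temperature nearly all the probability mass lands on the top token; at high temperature it spreads out. The ranking of the tokens never changes, though, so if the model's top choice is wrong, lowering the temperature just makes it wrong more consistently.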

Layer 4: Control and Verify Model Behavior

This is where things get serious — and where most teams underinvest.

System Prompts and Guardrails

Models default to confidence. They’ll give you a polished, authoritative answer even when they’re uncertain. Your job is to constrain that behavior.

Effective system prompts include instructions like “cite your sources,” “if unsure, say you don’t know,” and “only answer using retrieved context.” These shape tone, risk tolerance, and allowed reasoning behavior. They’re essential for any production system.

But let’s be honest: prompts are not magic. They guide behavior — they don’t add knowledge or capability. A well-prompted bad model is still a bad model.

RLHF (Reinforcement Learning from Human Feedback)

RLHF is how most modern models learn to be helpful, harmless, and aligned with human preferences. Human raters compare or score outputs, a reward model learns those preferences, and the model is then trained to produce responses the reward model rates highly. It improves helpfulness, politeness, safety, and general alignment.

What it does not necessarily improve: mathematical correctness, deep logical reasoning, or factual accuracy. RLHF aligns behavior with human preferences. Those preferences don’t always correlate with truth.

RLVR (Reinforcement Learning with Verifiable Rewards)

This is where the game changes. Instead of “humans liked this response,” RLVR uses objective reward functions. Did the code compile? Is the math solution correct? Were the policy constraints satisfied?

You’re rewarding correctness, not vibes. This is much closer to improving true reliability, and it’s one of the most promising directions in making AI systems you can actually trust for high-stakes decisions.
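A verifiable reward is just a function that checks the output against ground truth. The sketch below shows only that reward function for a math task, not the reinforcement learning loop around it, and the "last number in the response is the answer" convention is an assumption made for illustration.

```python
import re

def math_reward(response: str, expected: float, tol: float = 1e-6) -> float:
    """Return 1.0 if the last number in the response matches the expected answer, else 0.0."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", response)
    if not numbers:
        return 0.0  # no answer given, no reward
    return 1.0 if abs(float(numbers[-1]) - expected) <= tol else 0.0

right = math_reward("17 * 3 = 51, so the answer is 51", expected=51)
confident_but_wrong = math_reward("I am absolutely certain the answer is 50.", expected=51)
```

Notice that tone has no effect on the score. A polished, confident wrong answer earns exactly what a sloppy wrong answer earns: nothing.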

Tool Use and Function Calling

Here’s a simple rule: stop asking the model to do things it’s bad at.

LLMs are unreliable at exact arithmetic, especially with large numbers. They can’t reliably recall what’s in your database. They can’t check real-time data from memory. So let them call a calculator, query a database, or hit an API.

Accuracy skyrockets when you stop asking the model to do math in its head and give it a calculator instead. This isn’t a workaround — it’s good architecture.
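Here is a minimal sketch of the calculator idea. It assumes the model emits a tool call as a dict with `name` and `arguments` keys, which is a hypothetical shape and not any specific vendor's function-calling format. The calculator walks a parsed expression tree instead of using `eval()`, so it only ever does arithmetic.

```python
import ast
import operator

# Only these operations are allowed; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}

def calculator(expression: str):
    """Evaluate basic arithmetic (+, -, *, /, unary minus) without eval()."""
    def walk(node):
        if isinstance(node, ast.Constant):
            if isinstance(node.value, bool) or not isinstance(node.value, (int, float)):
                raise ValueError("only numeric literals are allowed")
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.operand))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval").body)

TOOLS = {"calculator": calculator}

def dispatch(tool_call: dict):
    """Run the tool the model asked for, refusing anything not registered."""
    name = tool_call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**tool_call["arguments"])

answer = dispatch({"name": "calculator", "arguments": {"expression": "1234 * 5678"}})
```

The model's job shrinks to deciding that a calculation is needed and writing the expression. The exact result comes from code.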

Deterministic Guard Layers

This might be my favorite category, because it’s the most underrated.

Schema validators. Policy engines. Rule-based filters. Confidence thresholds. The pattern is simple: the LLM generates, then a deterministic system verifies.

This is how you build AI for regulated environments. Not by trusting the model’s self-reported confidence, but by wrapping it in layers of programmatic verification that catch errors before they reach the user.
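The generate-then-verify pattern can be sketched with nothing but the standard library. The action names, the `amount` field, and the auto-approve limit below are hypothetical; what matters is that every check is deterministic and that any failure falls back to a safe default.

```python
import json

ALLOWED_ACTIONS = {"approve", "deny", "escalate"}  # hypothetical policy

def escalate(reason: str) -> dict:
    return {"action": "escalate", "reason": reason}

def guard(raw_output: str, max_auto_approve: float = 1000.0) -> dict:
    """Validate model output against a schema and a policy rule before acting on it."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return escalate("output was not valid JSON")
    if not isinstance(data, dict):
        return escalate("output was not a JSON object")

    action, amount = data.get("action"), data.get("amount")
    # Schema checks
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return escalate("action not in allowed set")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount < 0:
        return escalate("amount must be a non-negative number")
    # Policy check: the model may not approve large amounts on its own
    if action == "approve" and amount > max_auto_approve:
        return escalate("amount exceeds auto-approve limit")

    return {"action": action, "amount": amount, "reason": "passed all checks"}

ok = guard('{"action": "approve", "amount": 250}')
too_big = guard('{"action": "approve", "amount": 50000}')
```

Failing closed to `escalate` means a malformed or out-of-policy response costs you a human review, not an incident.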

Evaluation (The Hidden Multiplier)

You can’t improve what you don’t measure. And yet most teams “evaluate” their AI by running a few prompts and seeing if the output looks right.

Real evaluation includes custom eval sets, edge case testing, adversarial prompts, and distribution shift testing. It means building a pipeline that quantifies accuracy across the scenarios that actually matter for your use case.

Most systems fail here. They optimize for demo quality instead of production reliability. Don’t be that team.
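A bare-bones eval harness might look like the sketch below. The `system` callable stands in for your whole pipeline, and the toy system, cases, and tag names are invented so the example runs on its own. Exact-match scoring is also an assumption; many tasks need a fuzzier comparison.

```python
from collections import defaultdict

def evaluate(system, eval_set):
    """Run the system over labeled cases; report accuracy overall and per tag."""
    totals, correct = defaultdict(int), defaultdict(int)
    failures = []
    for case in eval_set:
        got = system(case["input"])
        ok = got == case["expected"]
        for tag in ["overall", *case.get("tags", [])]:
            totals[tag] += 1
            correct[tag] += ok
        if not ok:
            failures.append({"input": case["input"], "expected": case["expected"], "got": got})
    accuracy = {tag: correct[tag] / totals[tag] for tag in totals}
    return accuracy, failures

# Toy stand-in for a real pipeline: normalizes text
def toy_system(text: str) -> str:
    return text.strip().lower()

EVAL_SET = [
    {"input": "Hello", "expected": "hello", "tags": ["typical"]},
    {"input": "  padded  ", "expected": "padded", "tags": ["edge"]},
    {"input": "", "expected": "", "tags": ["edge"]},
    {"input": "Tab\there", "expected": "tab here", "tags": ["adversarial"]},
]

accuracy, failures = evaluate(toy_system, EVAL_SET)
```

The per-tag breakdown is the useful part. An overall score of 75% hides the fact that this toy system gets every adversarial case wrong.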

Putting It All Together

There’s no single technique that makes AI accurate. There’s a stack of decisions, each one compounding on the others.

The teams that build reliable AI systems aren’t the ones chasing the biggest model or the cleverest prompt. They’re the ones that think across all four layers — data, model, inference, and governance — and make deliberate choices at each level.

That’s not a framework you’ll find in a single API call. It’s systems thinking. And it’s the difference between a demo that impresses and a product that works.