You shipped a chatbot. The demo looked great. Then real users started sending edge cases, and suddenly your “intelligent” assistant is hallucinating product features that don’t exist. Sound familiar?

The gap between a working prototype and a production-grade AI system almost always comes down to one thing: evaluation. Without a structured way to measure quality, you’re flying blind—unable to tell whether your latest prompt tweak made things better, worse, or simply different. This article breaks down the evaluation landscape as it stands in early 2026, covering everything from public model benchmarks to the emerging discipline of testing agentic workflows.

Why Evaluation Is the Bottleneck Nobody Talks About

AI teams spend enormous energy on prompt engineering, fine-tuning, and architecture decisions. But without evals, every change is a guess. You have no way of knowing whether a model swap improved accuracy, whether a new system prompt reduced hallucinations, or whether your RAG pipeline is actually retrieving relevant context.

The challenge is that LLM outputs are non-deterministic and often subjective. The same prompt can produce different responses across runs, and quality depends on context, user intent, and domain-specific requirements. Traditional software testing, which checks for exact matches or return codes, covers only a small part of the problem. You also need evaluation methods designed for the failure modes of generative AI: hallucinations, instruction drift, inconsistent reasoning, and tool-call errors.

Public Benchmarks: The Starting Line, Not the Finish

If you follow the AI space at all, you’ve seen leaderboard scores thrown around as proof of model superiority. These public benchmarks serve a real purpose—they give us standardized, comparable measurements across models. But they are a starting point for model selection, not a replacement for testing your own system.

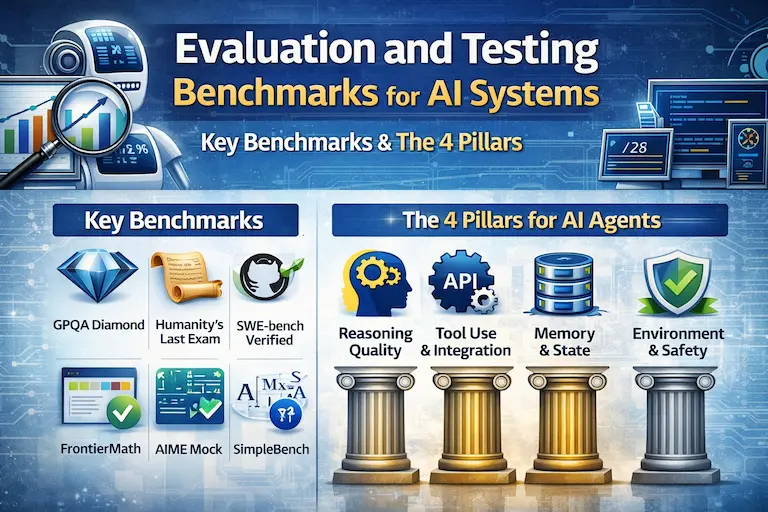

Key Benchmarks Worth Knowing in 2026

- GPQA Diamond – Graduate-level science questions requiring deep reasoning across physics, chemistry, and biology.

- Humanity’s Last Exam (HLE) – Over 2,500 expert-crafted questions spanning math, humanities, and natural sciences. No frontier model comes close to saturating it.

- SWE-bench Verified – Tests whether a model can resolve real GitHub issues end-to-end, using a human-validated subset of SWE-bench. It is one of the most widely cited measures of real-world coding ability.

- FrontierMath – Hundreds of unpublished expert-level math problems ranging from undergraduate to research level. Recently expanded to include unsolved open problems.

- AIME (Mock) – Competition-style math problems harder than MATH Level 5, used to differentiate top reasoning models.

- SimpleBench – Trick questions requiring common-sense reasoning rather than memorized facts. A good test for whether a model can avoid being misled.

Organizations like Epoch AI, Artificial Analysis, and Scale AI maintain independent benchmark suites and leaderboards that track frontier model performance over time. The Artificial Analysis Intelligence Index (v4.0) now incorporates ten evaluations, and Epoch’s Capabilities Index recently added APEX-Agents and ARC-AGI-2 to capture the shift toward agentic performance.

The takeaway: benchmarks tell you what a model can do in controlled conditions. They do not tell you whether it will perform well on your customer support tickets, your internal documents, or your specific domain.

Custom Evals: Where the Real Work Happens

Every production AI system needs its own evaluation pipeline. Public benchmarks use standardized datasets and metrics—your system has unique data, unique prompts, and unique definitions of “good.” Building custom evals is the single highest-leverage activity for any team shipping LLM-powered products.

The Three Pillars of a Custom Eval Pipeline

- Datasets – Curated test cases that represent real usage. Start with production logs and edge cases you’ve already encountered. Supplement with synthetic data for coverage. Version your datasets and grow them over time as you discover new failure modes.

- Metrics – Quantitative scores tied to what matters for your use case. For a customer support bot, that might be answer accuracy, tone, and escalation appropriateness. For a code generation tool, it’s whether the code compiles and passes tests. Keep it to three to five metrics—more than that and you’ll lose focus.

- Methodology – How you run evals and act on results. This includes handling non-determinism (run prompts multiple times and average), testing prompt sensitivity (inject noise and measure stability), and building evals into your CI/CD pipeline so regressions are caught before deployment.
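The two methodology habits above, repeated runs and noise injection, can be sketched in a few lines. This is a minimal illustration rather than a reference implementation: `fake_model`, `mentions_policy`, and the adjacent-character swap in `add_typo` are all hypothetical stand-ins, and a real pipeline would call your model and your own scorer instead.

```python
import random
import statistics
from typing import Callable

Generate = Callable[[str], str]   # prompt -> model output
Score = Callable[[str], float]    # model output -> score in [0, 1]


def repeated_score(generate: Generate, score: Score, prompt: str, runs: int = 5) -> dict:
    """Run one prompt several times and summarize the score distribution."""
    scores = [score(generate(prompt)) for _ in range(runs)]
    return {
        "mean": statistics.mean(scores),
        "stdev": statistics.pstdev(scores),  # spread across runs
        "min": min(scores),                  # worst run, not just the average
    }


def add_typo(prompt: str, rng: random.Random) -> str:
    """Hypothetical noise: swap two adjacent characters to mimic a typo."""
    if len(prompt) < 2:
        return prompt
    i = rng.randrange(len(prompt) - 1)
    chars = list(prompt)
    chars[i], chars[i + 1] = chars[i + 1], chars[i]
    return "".join(chars)


def noisy_mean_score(generate: Generate, score: Score, prompt: str,
                     variants: int = 10, seed: int = 0) -> float:
    """Average score over perturbed copies of the prompt."""
    rng = random.Random(seed)  # fixed seed keeps the eval reproducible
    return statistics.mean(
        score(generate(add_typo(prompt, rng))) for _ in range(variants)
    )


# Stand-ins so the sketch runs without a model API.
def fake_model(prompt: str) -> str:
    if "refund" in prompt.lower():
        return "Refunds are accepted within 30 days."
    return "I'm not sure."


def mentions_policy(output: str) -> float:
    return 1.0 if "30 days" in output else 0.0


clean = repeated_score(fake_model, mentions_policy, "What is your refund policy?")
noisy = noisy_mean_score(fake_model, mentions_policy, "What is your refund policy?")
print(clean, "score drop under noise:", clean["mean"] - noisy)
```

Reporting the minimum and the spread alongside the mean is a deliberate choice here: an average can hide the occasional bad run that users will actually notice.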

Scoring Methods: Pick the Right Tool

Not all metrics require the same evaluation approach. The practical pattern is to layer methods based on what you’re measuring:

- Code-based checks for deterministic validation: format compliance, JSON schema conformance, length constraints, required fields. Fast, free, reproducible.

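- LLM-as-a-Judge for nuanced quality dimensions: tone, helpfulness, factual accuracy, coherence. You prompt a capable model to score outputs against a rubric. Published comparisons report that strong judge models can agree with human raters about as often as human raters agree with each other, but judges carry biases of their own (for example toward longer answers or a particular answer position), so calibrate them against human labels.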

- Human evaluation for ground-truth calibration and safety-critical decisions. Expensive and slow, but essential for validating that your automated evals are actually measuring the right things.

A strong evaluation pipeline uses all three. Code checks catch obvious failures, LLM judges handle scale, and human review keeps the whole system honest.
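One way to layer the first two methods is to run the cheap deterministic checks first and only call a judge on outputs that pass them. The sketch below assumes a hypothetical output schema (`answer` and `sources` fields), a hypothetical length limit, and an illustrative rubric. The `judge` argument is any callable that takes a prompt and returns text, so no particular vendor API is implied.

```python
import json
import re
from typing import Callable, Optional

REQUIRED_FIELDS = {"answer", "sources"}  # hypothetical output schema
MAX_ANSWER_CHARS = 600                   # hypothetical length limit

JUDGE_RUBRIC = (
    "Rate the answer to the question for helpfulness and tone on a 1-5 scale.\n"
    "Reply with the number only.\n\nQuestion: {question}\nAnswer: {answer}"
)


def code_checks(raw_output: str) -> list[str]:
    """Return failure reasons; an empty list means every check passed."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["top-level JSON value is not an object"]
    failures = []
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        failures.append(f"missing fields: {sorted(missing)}")
    answer = data.get("answer", "")
    if not isinstance(answer, str):
        failures.append("answer is not a string")
    elif len(answer) > MAX_ANSWER_CHARS:
        failures.append("answer exceeds length limit")
    return failures


def parse_score(reply: str) -> Optional[int]:
    """Pull the first standalone digit 1-5 out of the judge's reply."""
    match = re.search(r"\b([1-5])\b", reply)
    return int(match.group(1)) if match else None


def evaluate(raw_output: str, question: str,
             judge: Optional[Callable[[str], str]] = None,
             min_score: int = 4) -> dict:
    """Run code checks first; call the judge only if they pass."""
    failures = code_checks(raw_output)
    if failures or judge is None:
        return {"passed": not failures, "failures": failures, "judge_score": None}
    answer = json.loads(raw_output)["answer"]
    score = parse_score(judge(JUDGE_RUBRIC.format(question=question, answer=answer)))
    passed = score is not None and score >= min_score
    return {"passed": passed, "failures": [], "judge_score": score}
```

Human review is not shown because it is a process rather than a function. In practice it usually means sampling judged outputs periodically and comparing the judge's scores with human labels.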

Evaluating Agentic Systems: A Different Beast

Here’s where things get genuinely hard. An AI agent isn’t just answering a question—it’s planning, selecting tools, calling APIs, handling errors, and adapting its strategy across multiple steps. Evaluating a single model response is difficult enough. Evaluating an entire autonomous workflow requires fundamentally different approaches.

Why Traditional Evals Fall Short for Agents

Standard LLM evaluation treats the system as a black box: input goes in, output comes out, you score the output. But with agents, the output alone doesn’t tell you enough. Two agents can reach the same correct answer through wildly different reasoning paths—one efficient, one wasteful. Or an agent might produce the right final answer but make a dangerous tool call along the way. You need visibility into the full execution trace, not just the endpoint.

The Four Evaluation Pillars for Agents

Recent frameworks from Amazon, McKinsey’s QuantumBlack, and academic researchers converge on evaluating agents across four dimensions:

- Reasoning Quality – Does the agent break down the goal into a sensible plan? Does it follow that plan, or drift? Metrics like PlanQuality and PlanAdherence evaluate whether the agent creates good strategies and sticks to them.

- Tool Use & Integration – Does the agent call the right tools with correct parameters? Does it handle API failures, timeouts, and unexpected responses gracefully? Tool-call hallucination—generating plausible but incorrect API payloads—is a common and dangerous failure mode.

- Memory & State – Does the agent maintain accurate context across steps? Does it retrieve the right information from long-term memory without missing decision-relevant details? The precision-recall tradeoff in memory retrieval is an active research area.

- Environment & Safety – Does the agent operate within defined guardrails? Does it avoid actions that corrupt system state or violate access controls? Production agents need to demonstrate consistent error recovery patterns.
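Part of this can be checked mechanically once you log the execution trace. The sketch below covers only the tool-use and safety dimensions, plus a crude error-recovery and step-budget check. Reasoning quality and memory usually need a judge model or human review. The tool names, required arguments, forbidden list, and the `ToolCall` record are all hypothetical, and the recovery rule (a failed call counts as recovered if any later call succeeds) is a deliberately simple assumption.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    tool: str
    args: dict
    ok: bool = True  # False if the tool returned an error or timed out


# Hypothetical registry: tool name -> argument names the tool requires.
TOOL_SCHEMAS = {
    "search_orders": {"customer_id"},
    "issue_refund": {"order_id", "amount"},
}
FORBIDDEN_TOOLS = {"delete_account"}  # hypothetical guardrail


def evaluate_trace(trace: list[ToolCall], max_steps: int = 6) -> dict:
    """Score an execution trace step by step, not only the final answer."""
    forbidden = [c.tool for c in trace if c.tool in FORBIDDEN_TOOLS]
    unknown = [
        c.tool for c in trace
        if c.tool not in TOOL_SCHEMAS and c.tool not in FORBIDDEN_TOOLS
    ]
    # A call has bad arguments if any required argument name is missing.
    bad_args = [
        c.tool for c in trace
        if c.tool in TOOL_SCHEMAS and not TOOL_SCHEMAS[c.tool] <= set(c.args)
    ]
    # Simplifying assumption: a failure is recovered if a later call succeeds.
    failed = [i for i, c in enumerate(trace) if not c.ok]
    recovered = all(any(later.ok for later in trace[i + 1:]) for i in failed)
    return {
        "forbidden_calls": forbidden,
        "unknown_tools": unknown,
        "bad_args": bad_args,
        "recovered_from_errors": recovered,
        "within_step_budget": len(trace) <= max_steps,
    }


trace = [
    ToolCall("search_orders", {"customer_id": "c1"}, ok=False),  # timeout
    ToolCall("search_orders", {"customer_id": "c1"}),            # retry succeeds
    ToolCall("issue_refund", {"order_id": "o9"}),                # missing "amount"
]
report = evaluate_trace(trace)
print(report)
```

Returning each dimension separately, instead of a single blended score, makes it easier to see whether a regression came from tool selection, argument construction, or guardrail violations.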

Agent-Specific Benchmarks

- GAIA – A broad benchmark requiring multi-step reasoning, tool use, and web browsing. One of the primary independent yardsticks for general agent capability.

- SWE-bench – Also applicable to agents, testing whether they can navigate codebases, write code, and resolve issues autonomously.

- AgentBench – Evaluates LLM-as-agent performance across eight distinct environments in multi-turn settings.

- CUB (Computer Use Benchmark) – Tests whether agents can complete realistic multi-step tasks by navigating GUIs, clicking buttons, and managing files.

- METR’s Time Horizon metric – Estimates the length of task, measured by how long it takes a skilled human, that an AI model can complete with 50% success, spanning ML research engineering and general software tasks.

The Eval Tooling Landscape

You don’t have to build everything from scratch. The evaluation tooling ecosystem has matured significantly, with platforms now covering the full lifecycle from offline testing to production monitoring.

- DeepEval – A Python-first framework (similar to Pytest) with pre-built metrics for RAG evaluation, agent reasoning, and plan adherence. Open-source with a cloud platform for team collaboration.

- Evidently AI – Open-source library (25M+ downloads) supporting LLM judges, agent simulations, and synthetic test generation. Strong for RAG and agent evaluation workflows.

- Braintrust – Combines dataset management, scoring, tracing, and CI/CD integration. Production monitoring surfaces failures that can be added to regression test sets with one click.

- LangSmith (LangChain) – Evaluation modules integrated with the LangChain ecosystem. Useful if you’re already building with LangGraph or LangChain agents.

- Maxim AI, Arize, Comet Opik – Enterprise-grade platforms offering simulation, real-time observability, drift detection, and human-in-the-loop workflows.

The key criterion for choosing a platform: it should support multiple eval methods (code checks, LLM judges, and human review), handle both offline testing and production monitoring, and let you define custom metrics specific to your use case. Avoid tools that only offer generic metrics—your eval criteria need to match your product, not someone else’s benchmark.

A Practical Checklist for Getting Started

If you’re building AI products and don’t have an eval pipeline yet, here’s the minimum viable approach:

- Define what “good” means for your specific use case. Write it down. Be concrete.

- Build a golden dataset of 50–100 test cases from real production data and known edge cases.

- Pick three to five metrics that capture the quality dimensions your users care about most.

- Set up offline evals that run before every deployment. Treat them like unit tests—nothing ships without passing.

- Add production monitoring that samples live traffic and scores it. When you find failures, add them to your test dataset.

- For agents: trace the full execution path, not just the final output. Evaluate reasoning, tool use, and error recovery separately.
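As a rough illustration of the offline-gate and feedback-loop steps in this checklist, the sketch below scores a golden dataset, returns an exit code a CI job could use, and appends production failures as new regression cases. The dataset contents, the substring-based pass criterion, the 90% threshold, and `stub_model` are all hypothetical placeholders for your own data, metrics, and system.

```python
from typing import Callable

# Hypothetical golden cases; in practice these come from production logs.
GOLDEN_SET = [
    {"input": "What is your refund policy?", "must_include": "30 days"},
    {"input": "Do you ship to Canada?", "must_include": "yes"},
]


def pass_rate(generate: Callable[[str], str], dataset: list[dict]) -> float:
    """Fraction of cases whose output contains the expected phrase."""
    passed = sum(
        case["must_include"].lower() in generate(case["input"]).lower()
        for case in dataset
    )
    return passed / len(dataset)


def gate(generate: Callable[[str], str], dataset: list[dict],
         threshold: float = 0.9) -> int:
    """Return a process exit code: 0 if the suite passes, 1 otherwise."""
    rate = pass_rate(generate, dataset)
    print(f"pass rate {rate:.0%}, threshold {threshold:.0%}")
    return 0 if rate >= threshold else 1


def record_failure(dataset: list[dict], user_input: str, must_include: str) -> None:
    """Turn a failure found in production into a permanent regression case."""
    dataset.append({"input": user_input, "must_include": must_include})


def stub_model(prompt: str) -> str:
    """Stand-in for the real system under test."""
    if "refund" in prompt.lower():
        return "Refunds are accepted within 30 days."
    return "Yes, we ship to Canada."


# In a CI job this would typically end with: sys.exit(gate(my_system, GOLDEN_SET))
exit_code = gate(stub_model, GOLDEN_SET)
```

A substring check is about the simplest metric possible. It is used here only to keep the example short, and most teams would swap in the metrics they defined earlier in the checklist.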

Start simple. A focused eval suite that grows with your product will outperform an elaborate framework that nobody maintains.

Final Thoughts

Benchmarks are useful for picking a base model. Custom evals are what make your product reliable. And as we move deeper into the era of autonomous agents, evaluation is shifting from “score the output” to “understand the entire decision-making process.”

The teams that ship reliable AI products in 2026 won’t be the ones with the highest benchmark scores. They’ll be the ones with the most rigorous, continuously improving evaluation pipelines—the ones who treat evals not as a checkbox, but as a core engineering discipline.