Turning Messy Patient Charts Into Clear, Trustworthy Summaries

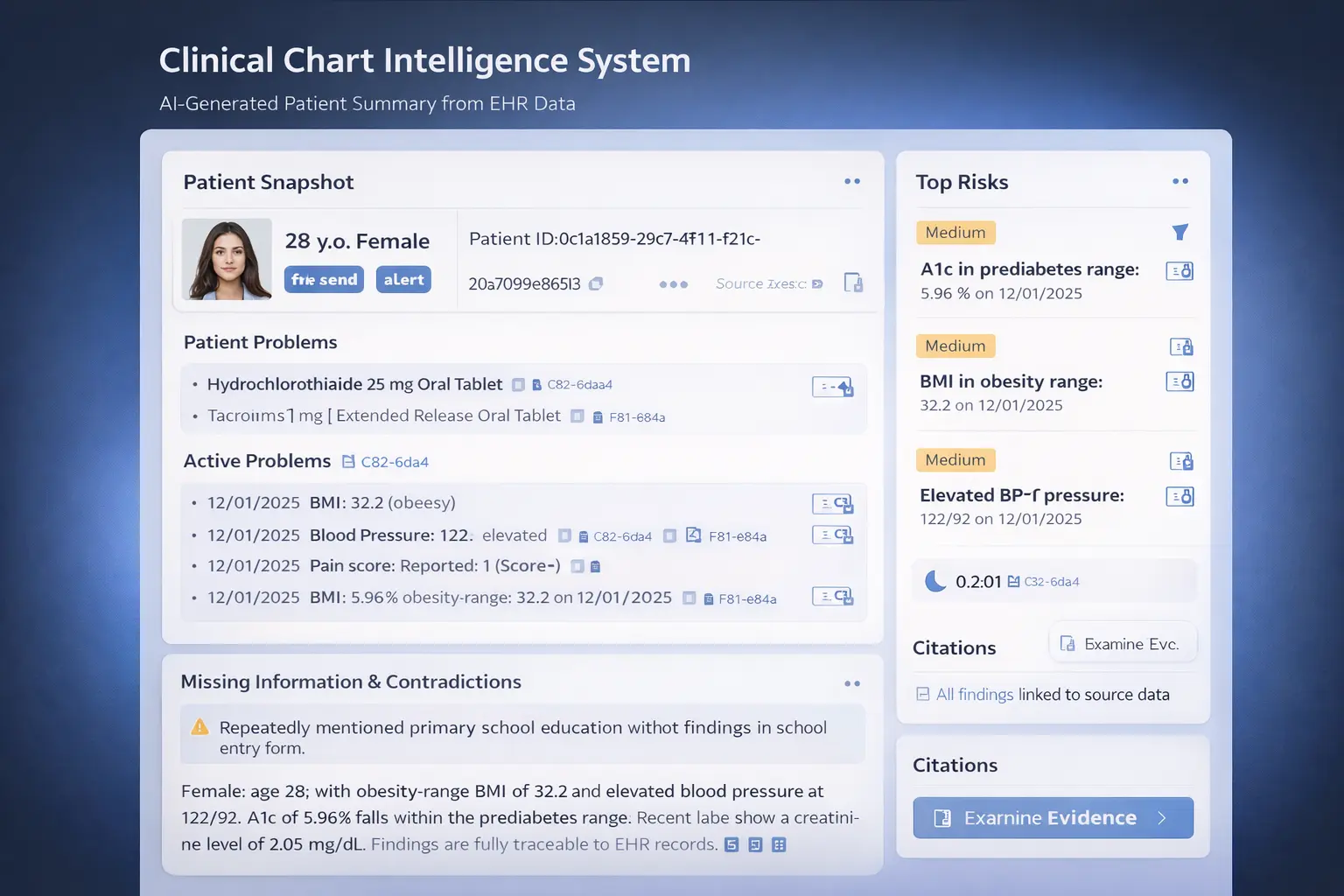

An AI system that reads through an entire patient medical record — labs, medications, notes, vitals — and produces a clinician-ready summary that flags risks, spots gaps, and cites every claim.

THE PROBLEM

Patient charts are a mess — and lives depend on reading them correctly

A single patient's medical record can contain hundreds of entries across lab results, medication lists, clinical notes, and vital signs, often spread across years and written by different providers. Clinicians, care managers, and reviewers spend a great deal of time manually piecing these together, usually under pressure.

Critical information gets buried. Contradictions go unnoticed. Risk signals are missed.

Information Overload

Hundreds of entries per patient — labs, meds, notes, vitals — with no unified view.

Hidden Contradictions

Different parts of the chart may disagree with each other, and nobody catches it.

Clinician Burnout

Manual chart review is tedious, time-consuming, and a major contributor to burnout.

THE SOLUTION

An AI system that reads the chart, flags what matters, and shows its work

I built a multi-agent system that takes a raw patient record and produces a complete, clinician-style analysis: it summarizes what's important, flags risks, identifies gaps, and cites the original data for every claim.

01

Patient Snapshot

A clear overview of demographics, active problems, medications, and key lab/vital values — everything a clinician needs at a glance.

02

Risk Flags

Automatic detection of concerning patterns like abnormal lab trends, elevated blood pressure, or prediabetes-range values — each with a plain-language explanation.

03

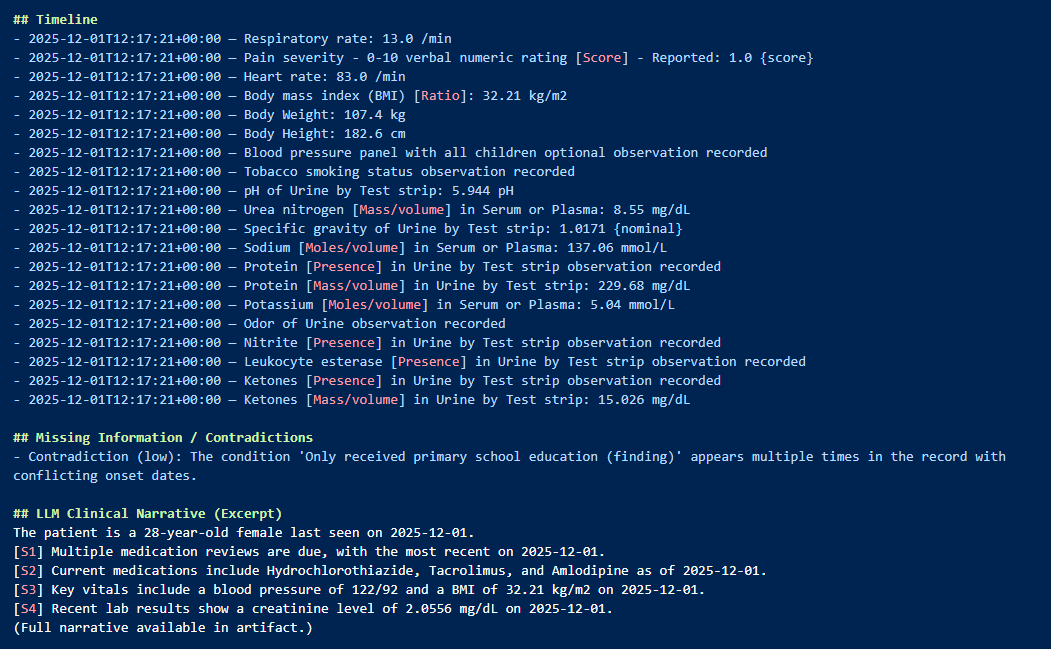

Missing Info & Contradictions

The system spots what’s missing or inconsistent — documentation gaps, conflicting entries, and unresolved references across the chart.
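As a rough illustration of what a contradiction check can look like, here is a minimal sketch that groups medication entries by name and reports any medication whose entries disagree on status. The record shape (`id`, `medication`, `status`) and the function name `find_status_conflicts` are assumptions made for this example, not the project's actual schema.

```python
from collections import defaultdict


def find_status_conflicts(entries):
    """Return one finding per medication whose entries disagree on status."""
    by_med = defaultdict(list)
    for entry in entries:
        # Normalize the name so "Metformin" and "metformin" are compared together.
        by_med[entry["medication"].lower()].append(entry)

    findings = []
    for med, group in sorted(by_med.items()):
        statuses = {entry["status"] for entry in group}
        if len(statuses) > 1:
            findings.append({
                "medication": med,
                "statuses": sorted(statuses),
                # Every finding carries the ids of the records it came from.
                "citations": [entry["id"] for entry in group],
            })
    return findings


# Hypothetical entries: the medication list and a clinical note disagree.
entries = [
    {"id": "med-001", "medication": "Metformin", "status": "active"},
    {"id": "note-014", "medication": "metformin", "status": "stopped"},
    {"id": "med-002", "medication": "Lisinopril", "status": "active"},
]
conflicts = find_status_conflicts(entries)
```

The check only reports that the entries disagree and where; deciding which entry is correct stays with the clinician.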

04

Full Citations

Every claim in the output links back to its original source record. Nothing is invented. Everything is traceable and auditable.

HOW IT WORKS

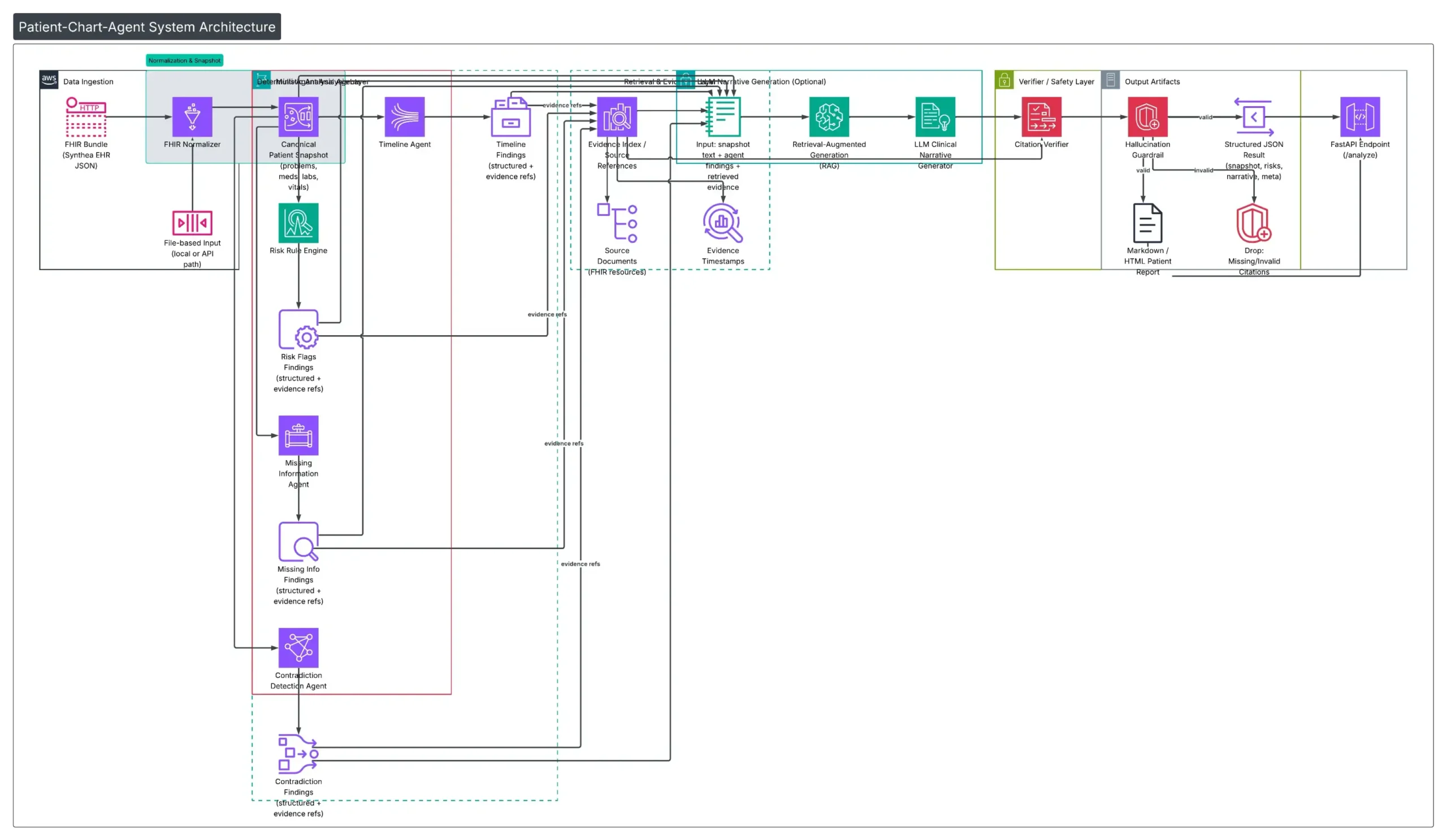

Six specialized agents, each with one job

Rather than one monolithic model, the system uses a team of focused agents, each responsible for a specific task. Narrow responsibilities make each step easier to explain, test, and audit.
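The single-responsibility idea can be sketched as a chain of small functions that pass a shared state object along. The two agents below (`snapshot_agent`, `risk_agent`), the `ChartState` class, and the record layout are hypothetical stand-ins chosen for illustration; they are not the six agents the system actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ChartState:
    """Shared state handed from one agent to the next (illustrative only)."""
    record: dict
    snapshot: dict = field(default_factory=dict)
    flags: list = field(default_factory=list)
    trace: list = field(default_factory=list)


Agent = Callable[[ChartState], ChartState]


def snapshot_agent(state: ChartState) -> ChartState:
    # One job: summarize basic facts about the record.
    state.snapshot = {
        "patient_id": state.record["id"],
        "n_entries": len(state.record["entries"]),
    }
    return state


def risk_agent(state: ChartState) -> ChartState:
    # One job: turn entries already marked abnormal into cited flags.
    for entry in state.record["entries"]:
        if entry.get("abnormal"):
            state.flags.append({"finding": entry["name"], "citation": entry["id"]})
    return state


def run_pipeline(record: dict, agents: list[Agent]) -> ChartState:
    state = ChartState(record=record)
    for agent in agents:
        state = agent(state)
        state.trace.append(agent.__name__)  # audit trail of which agent ran
    return state


record = {
    "id": "patient-001",
    "entries": [
        {"id": "obs-1", "name": "A1c", "abnormal": True},
        {"id": "obs-2", "name": "Heart rate", "abnormal": False},
    ],
}
result = run_pipeline(record, [snapshot_agent, risk_agent])
```

Because each agent is a plain function over the same state, it can be unit-tested in isolation, and the `trace` list records which steps contributed to a given report.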

SEE IT IN ACTION

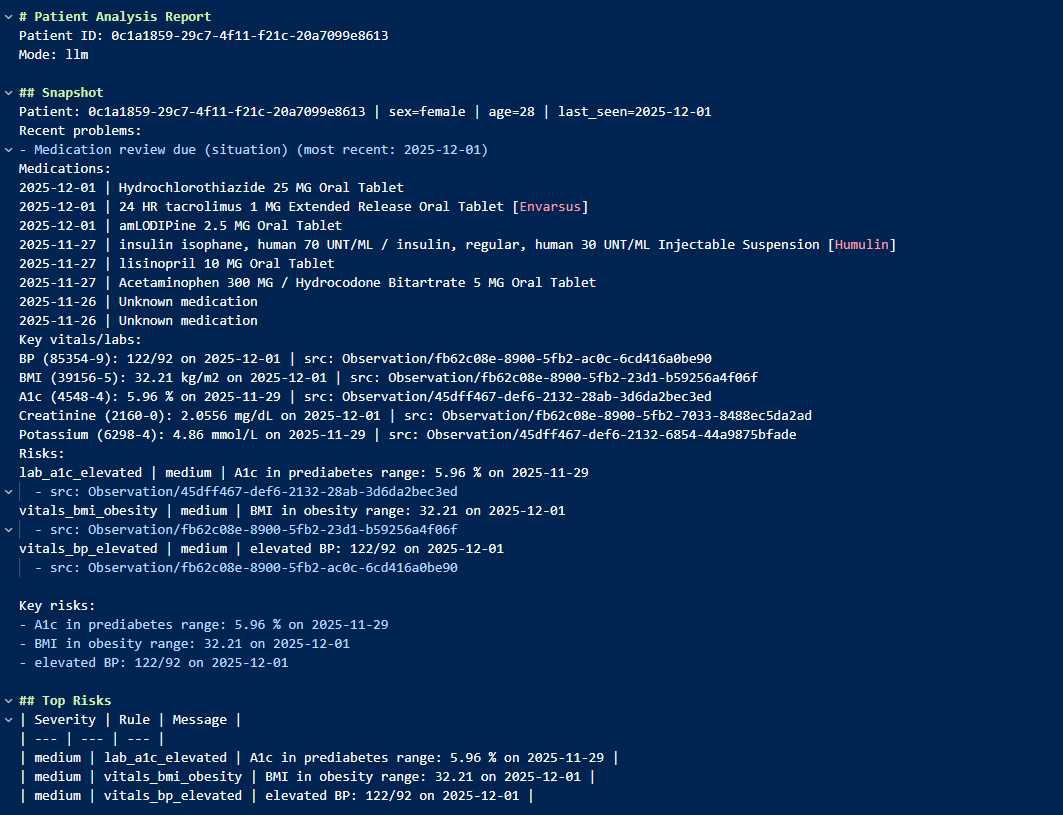

From raw patient data to clear, cited analysis

Here’s an example: a synthetic patient record is fed into the system. Seconds later, the output is a structured report that any clinician could immediately use.

Patient Summary & Risk Flags

The system identifies obesity-range BMI, elevated blood pressure, and prediabetes-range A1c — all with severity levels and source citations.
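For a sense of how flags like these can be produced deterministically, here is a minimal rule-table sketch. The cutoffs are commonly cited ones (BMI of 30 or more, A1c from 5.7% up to but not including 6.5%, systolic pressure of 130 mmHg or more); the project's real rules, severity labels, and field names may differ, and everything named here is an assumption for the example.

```python
# Each rule: (observation kind, predicate, finding label, severity).
# Thresholds are commonly cited cutoffs, used here for illustration only.
RULES = [
    ("bmi", lambda v: v >= 30.0, "obesity-range BMI", "moderate"),
    ("a1c", lambda v: 5.7 <= v < 6.5, "prediabetes-range A1c", "moderate"),
    ("systolic_bp", lambda v: v >= 130, "elevated blood pressure", "moderate"),
]


def flag_risks(observations):
    """Apply every matching rule and attach the source observation id."""
    flags = []
    for obs in observations:
        for kind, is_abnormal, label, severity in RULES:
            if obs["kind"] == kind and is_abnormal(obs["value"]):
                flags.append({
                    "finding": label,
                    "severity": severity,
                    "value": obs["value"],
                    "citation": obs["id"],
                })
    return flags


# Hypothetical observations; the last A1c is in the normal range.
observations = [
    {"id": "obs-101", "kind": "bmi", "value": 31.2},
    {"id": "obs-102", "kind": "a1c", "value": 6.0},
    {"id": "obs-103", "kind": "systolic_bp", "value": 138},
    {"id": "obs-104", "kind": "a1c", "value": 5.4},
]
flags = flag_risks(observations)
```

Keeping the rules in a table makes each threshold visible and reviewable in one place, which matters when a clinician asks why something was flagged.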

ENGINEERING DECISIONS

Why I built it this way

Multi-agent over monolithic

Instead of one big AI prompt, each agent has a single responsibility. This makes the system testable, debuggable, and auditable — critical in healthcare where you need to explain every output.

Deterministic first, LLM optional

The core analysis runs without any AI model. The LLM layer only adds a narrative summary, and only from verified data. If the LLM call fails, the system still returns the full deterministic analysis.
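The fallback behavior can be sketched in a few lines. Here `build_report` and the `narrate` callable are hypothetical names; `narrate` stands in for whatever LLM call is used, and the sketch assumes it may raise on failure.

```python
def build_report(findings, narrate=None):
    """Always return the deterministic findings; add a narrative only if one succeeds."""
    report = {
        "findings": findings,
        "narrative": None,
        "narrative_source": "none",
    }
    if narrate is None:
        return report  # LLM layer disabled: deterministic output only
    try:
        report["narrative"] = narrate(findings)
        report["narrative_source"] = "llm"
    except Exception:
        # The narrator failed; the deterministic findings are left untouched.
        pass
    return report


def failing_narrator(findings):
    # Stand-in for an LLM call that times out or errors.
    raise RuntimeError("model unavailable")


def simple_narrator(findings):
    # Stand-in for a successful LLM call.
    return f"{len(findings)} finding(s) need review."


findings = [{"finding": "prediabetes-range A1c", "citation": "obs-102"}]
report_without_llm = build_report(findings)
report_after_failure = build_report(findings, narrate=failing_narrator)
report_with_llm = build_report(findings, narrate=simple_narrator)
```

In all three cases the `findings` list is identical; only the optional narrative differs.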

Citation-first output discipline

Every claim must link to a source record. If a statement can't be cited, it gets removed. This is the design principle that makes the system safe for clinical use.
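One way to enforce this rule is a final filter that keeps a claim only when it has at least one citation and every citation resolves to a known source record. The claim shape and the name `enforce_citations` are assumptions for this sketch rather than the project's actual interface.

```python
def enforce_citations(claims, source_ids):
    """Split claims into those fully backed by known records and those that are not."""
    kept, dropped = [], []
    for claim in claims:
        citations = claim.get("citations", [])
        # A claim survives only if it cites something and every cited id exists.
        if citations and all(cid in source_ids for cid in citations):
            kept.append(claim)
        else:
            dropped.append(claim)
    return kept, dropped


# Hypothetical source record ids and candidate claims.
source_ids = {"obs-101", "obs-102", "med-001"}
claims = [
    {"text": "BMI is in the obesity range.", "citations": ["obs-101"]},
    {"text": "Patient reports chest pain.", "citations": []},
    {"text": "A1c is rising.", "citations": ["obs-102", "obs-999"]},
]
kept, dropped = enforce_citations(claims, source_ids)
```

Returning the dropped claims as well makes the removal itself auditable instead of silent.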

Synthetic data from day one

All development uses Synthea-generated patient data, so no real patient information is ever handled, while the records still provide realistic clinical complexity.

BY THE NUMBERS

Impact at a glance

6

Specialized AI agents working as a coordinated team

100

Synthetic patient records analyzed with full traceability

100%

Citation coverage — every claim links to source evidence

TECH STACK

Built with

REFLECTIONS

What I learned building this

Safety must be designed in, not bolted on

In healthcare AI, “fail safely” isn’t a feature — it’s the architecture. Every design choice was filtered through: “What happens when this goes wrong?”

Deterministic + AI is more powerful than AI alone

The hybrid approach — rule-based analysis as the foundation, LLM as an optional narrator — produces more reliable and trustworthy output than pure AI generation.

Agent boundaries are design decisions

Deciding what each agent should and shouldn’t do was the hardest part. Getting the boundaries right made everything downstream — testing, debugging, auditing — dramatically easier.

Healthcare data standards are complex but essential

Working with FHIR/HL7 standards taught me that the hardest part of clinical AI isn’t the AI — it’s understanding and correctly handling the data.
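To make the data-handling point concrete, here is a small sketch that flattens a FHIR `Observation` resource into a simple record. The sample resource is hand-written in the general shape of a FHIR R4 Observation, with LOINC code 4548-4 for hemoglobin A1c; the flattened field names and the helper `flatten_observation` are assumptions for illustration.

```python
import json

LOINC_SYSTEM = "http://loinc.org"


def flatten_observation(resource):
    """Extract id, LOINC code, value, unit, and date from an Observation."""
    if resource.get("resourceType") != "Observation":
        raise ValueError("expected an Observation resource")

    codings = resource.get("code", {}).get("coding") or []
    # Take the first LOINC coding, if any; other code systems are ignored here.
    loinc = next((c for c in codings if c.get("system") == LOINC_SYSTEM), {})

    # Not every Observation has valueQuantity (blood pressure, for example,
    # usually carries its values in `component`), so treat it as optional.
    quantity = resource.get("valueQuantity") or {}

    return {
        "id": resource.get("id"),
        "loinc": loinc.get("code"),
        "display": loinc.get("display"),
        "value": quantity.get("value"),
        "unit": quantity.get("unit"),
        "date": resource.get("effectiveDateTime"),
    }


sample = json.loads("""
{
  "resourceType": "Observation",
  "id": "obs-102",
  "status": "final",
  "code": {
    "coding": [
      {"system": "http://loinc.org", "code": "4548-4",
       "display": "Hemoglobin A1c/Hemoglobin.total in Blood"}
    ]
  },
  "effectiveDateTime": "2021-03-04T09:30:00Z",
  "valueQuantity": {"value": 6.0, "unit": "%"}
}
""")
flat = flatten_observation(sample)
```

Most of the care goes into the optional and repeating fields: a parser that assumes every observation has a single numeric value will quietly drop or misread part of the chart.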

EXPLORE

Want to see the code?

The full source code, architecture documentation, and sample reports are available on GitHub.